
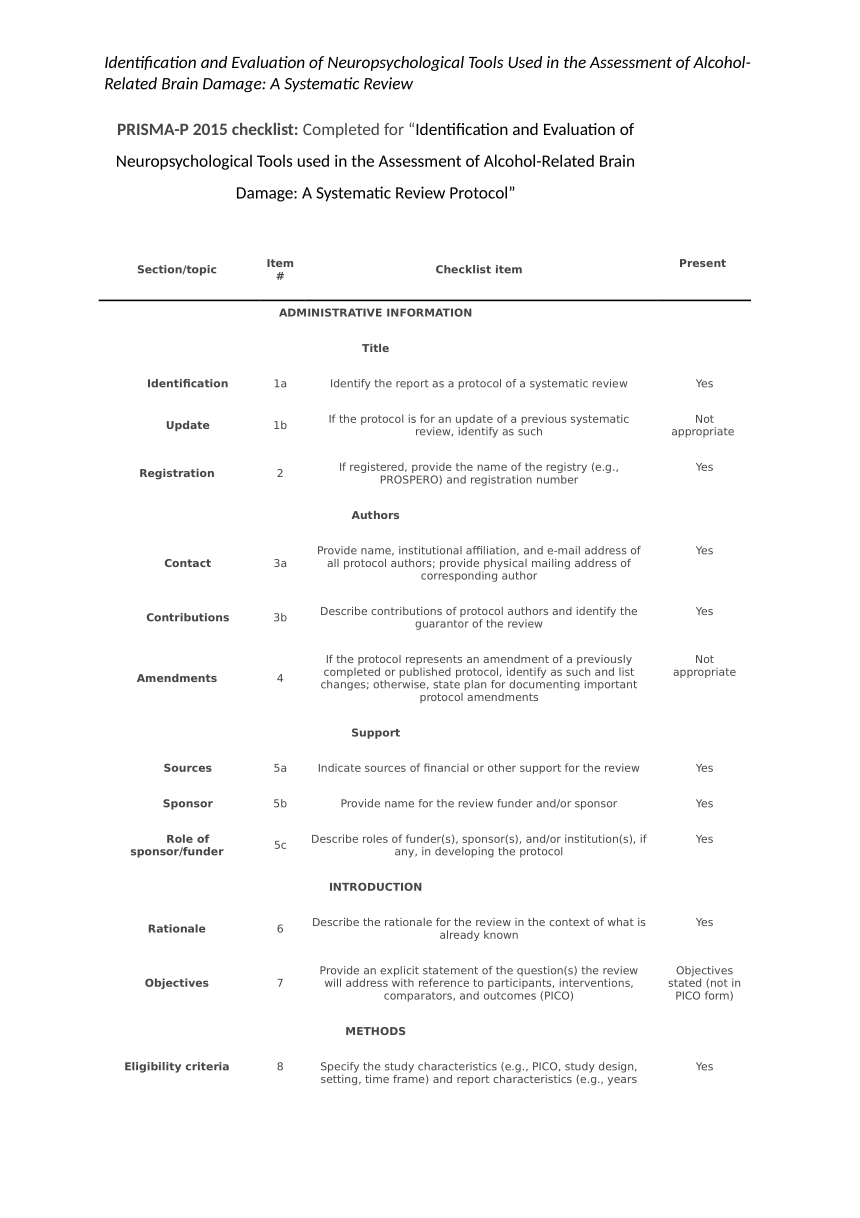

Critical appraisal tools

Understanding Health Research can help you to critically appraise research. However, other critical appraisal tools are available, focusing on different audiences or types of research. CASP provides resources and learning and development opportunities to support the development of critical appraisal skills in the UK. The Centre for Evidence Based Medicine produces worksheets to assist with the critical appraisal of specific types of research, including systematic reviews.

Reporting guidelines

We can only assess the relevance and quality of research if we have sufficient information about its methods and results. Reporting guidelines help researchers to describe all the important details of their studies thoroughly. We can use reporting guidelines to check how complete an article is, and therefore whether it is worth appraising further. We can then use critical appraisal checklist questions to assess how good the described methods are and what the results actually mean. Some internationally recognised reporting guidelines include:

- CONSORT: The CONSORT Group has developed tools to help researchers report their randomised controlled trials fully and comprehensively. If you are interested in appraising a paper that reports a randomised controlled trial, you could check what the authors have reported against the CONSORT 2010 Checklist to see whether the researchers have failed to mention anything important.
- STROBE: STROBE is a similar organisation to CONSORT. It produces checklists that researchers can use to make sure that they report their observational studies well. If you are interested in appraising a case-control or cross-sectional study, you could check the content of the paper against the corresponding STROBE checklist to see whether the researchers have failed to mention anything important.
- A checklist is also available for checking that interview and focus group research has been reported well.

Many other reporting guidelines are listed by initiatives set up to support better reporting of research studies.

Critical Appraisal tools

Critical appraisal is the systematic evaluation of clinical research papers in order to establish:

1. Does this study address a clearly focused question?
2. Did the study use valid methods to address this question?
3. Are the valid results of this study important?
4. Are these valid, important results applicable to my patient or population?

If the answer to any of these questions is "no", you can save yourself the trouble of reading the rest of the paper. This section contains useful tools and downloads for the critical appraisal of different types of medical evidence. Example appraisal sheets are provided together with several helpful examples.

AMSTAR - Assessing the Methodological Quality of Systematic Reviews

Each entry below gives the tool name, a description, the article or tool reference, and the validation process.

- The TREND (Transparent Reporting of Evaluations with Nonrandomized Designs) Statement. A 22-item checklist developed to guide standardized reporting of nonrandomized controlled trials. It complements the CONSORT statement, which was developed for RCTs. Reference: Des Jarlais DC, Lyles C, Crepaz N, and the TREND Group. Improving the reporting quality of nonrandomized evaluations of behavioral and public health interventions: the TREND statement. American Journal of Public Health, 94, 361–366. Validation: Provides information on validated instruments, such as their psychometric and biometric properties, but does not state whether the TREND statement itself is validated.
- Newcastle-Ottawa Scale (NOS). Developed to assess the quality of nonrandomised studies, with its design, content and ease of use directed to the task of incorporating quality assessments into the interpretation of meta-analytic results. It allocates a maximum of nine stars for the quality of selection, comparability, exposure and outcome of study participants. Reference: Wells G, Shea B, O'Connell D, et al. The Newcastle-Ottawa Scale (NOS) for assessing the quality of nonrandomized studies in meta-analysis. Validation: The authors of the NOS state that the validity assessment of the scale is under development.
- Quality Assessment Tool for Quantitative Studies (Effective Public Health Practice Project). Developed by the Effective Public Health Practice Project for use in public health; it can be applied to articles on any public health topic. It consists of seven steps. Reference: Effective Public Health Practice Project Quality Assessment Tool, 2003. Validation: The validation process involved assessing the instrument's content and categories for clarity, completeness and relevance, and an overall comparison with similar types of tools.
- Checklist for Measuring Quality (Downs and Black). Provides both an overall score for study quality and a numeric score out of a possible 30 points. It has five sections. The tool can be administered either within a systematic review or as a quality assessment tool for individual articles. Reference: Downs SH, Black N. The feasibility of creating a checklist for the assessment of the methodological quality both of randomised and non-randomised studies of health care interventions. J Epidemiol Community Health 1998;52:377–84. Validation: The checklist was revised and tested by comparing the Quality Index (total score) with the total score obtained using an existing validated checklist (Standards of Reporting Trials Group. A proposal for structured reporting of randomized controlled trials. JAMA 1994;272:1926–31).
- GATE (Graphic Appraisal Tool for Epidemiology). A visual framework that illustrates the generic design of all epidemiologic studies. Reference: Jackson R, Ameratunga S, Broad J, Connor J, Lethaby A, Robb G, et al. The GATE frame: critical appraisal with pictures. Evid Based Med 2006;11:35–8. Validation: Not validated.
- CriSTal Checklist. Evaluates the quality of various research designs, with separate checklists for appraising an information needs analysis and for appraising a user study. Validation: Not stated.
- ReLIANT (Reader's guide to the Literature on Interventions Addressing the Need for education and Training). Aimed at appraising published reports of educational and training interventions. Reference: Koufogiannakis D, Booth A, Brettle A. ReLIANT: Reader's guide to the Literature on Interventions Addressing the Need for education and Training. Library and Information Research 2006;30:44–51. Validation: Not stated.
- EBLIP checklist for library research. Provides a thorough, generic list of questions to ask when attempting to determine the validity, applicability and appropriateness of a study. Reference: Eleven steps to EBLIP service. Health Information and Libraries Journal, 2009, vol. … Validation: Not stated.
- READER. An acronym representing a sequence of steps in the assessment of general practice literature. The article is evaluated with a scoring system, and at the end the reader decides what to do with it. Reference: MacAuley D. READER: an acronym to aid critical reading by general practitioners. British Journal of General Practice 1994;44:83–85. Validation: Not stated.
- STARD Statement. Consists of a 25-item checklist and a flow diagram that help to determine the accuracy and completeness of reporting of studies of diagnostic accuracy. Reference: Bossuyt PM, Reitsma JB, Bruns DE, Gatsonis CA, Glasziou PP, Irwig LM, et al. The STARD statement for reporting studies of diagnostic accuracy: explanation and elaboration. Clin Chem 2003;49:7–18. Validation: Not stated.
- MINORS (Methodological Index for Non-Randomized Studies). A validated instrument of 12 items designed to assess the methodological quality of non-randomized surgical studies. The first eight items are specifically for non-comparative studies. Reference: Slim K, Nini E, Forestier D, Kwiatkowski F, Panis Y, Chipponi J. Methodological index for non-randomized studies (MINORS): development and validation of a new instrument. Aust NZ J Surg 2003;73:712–716. Validation: Followed the principles of scale construction outlined by Bland JM, Altman DG. Validating scales and indexes. BMJ 2002;324:606–7.
- JBI tool for narrative and opinion. Evaluates narrative, opinion and other textual evidence. Registration with JBI and a login are required to use this tool. Validation: Not stated.
- Modified Russell and Gregory criteria for methodological soundness. A nine-item checklist divided into three themes (finding validity, description and application). Reference: Russell C, Gregory D. Evaluation of qualitative research studies. Evid Based Nurs 2003;6:36–40. Validation: Not stated.
- Quality of Reporting of Observational Longitudinal Research. Comprises 33 criteria and a flow diagram adapted from CONSORT. The criteria fall into two principal categories: (1) aspects that could influence effect estimates and (2) more descriptive or contextual elements. Reference: Tooth L, Ware R, Bain C, Purdie DM, Dobson A. Quality of reporting of observational longitudinal research. Am J Epidemiol 2005;161(3):280–288. Validation: Two raters independently tested the final checklist on a random selection of articles describing observational longitudinal research.
- GRADE system. Classifies the quality of evidence into one of four levels: high, moderate, low and very low. The GRADE system offers two grades of recommendation: "strong" and "weak". Reference: Guyatt GH, Oxman AD, Vist GE, Kunz R, Falck-Ytter Y, Alonso-Coello P, et al. GRADE: an emerging consensus on rating quality of evidence and strength of recommendations. BMJ 2008;336:924–6. Validation: Not stated.
- Agency for Healthcare Research and Quality (AHRQ) Evidence-based Practice Center (EPC) program. A panel of 5–8 experts from 13 chosen centers convenes to develop a topic's key questions, advise on which types of studies to include or exclude, and suggest other analyses that may be useful. Reference: Atkins D, Fink K, Slutsky J. Better information for better health care: the Evidence-based Practice Center program and the Agency for Healthcare Research and Quality. Ann Intern Med 2005 Jun 21;142(12 Pt 2):1035–1041. Validation: The Agency elected to emphasize broad, general expertise among the EPCs rather than establishing centers specializing in a single content area, such as cardiology. This EPC is now examining whether scores calculated by using this instrument are associated with reported adverse event rates in other surgical and nonsurgical case series.
- Pengel scale. Specific to prospective studies; six criteria are used to assess methodological quality. Reference: Pengel LHM, Herbert RD, Maher CG, Refshauge KM. Acute low back pain: systematic review of its prognosis. 2003;327(7410):323. Validation: Not stated.
- Modified methodological quality assessment tool (Shamliyan et al.). Developed from existing assessment tools. It consists of two checklists, one for studies of incidence or prevalence and another for studies of risk factors; each has 6 items that assess external validity. For internal validity, the incidence checklist has 5 items and the risk factor checklist has 13 items. Reference: Shamliyan T, Kane RL, Ansari MT, Raman G, Berkman ND, Grant M, Janes G, Maglione M, Moher D, Nasser M, Robinson KA, Segal JB, Tsouros S. Criteria to assess quality of observational studies evaluating the incidence, prevalence and risk factors of chronic diseases. Validation: The checklists were pilot tested, with the experts each evaluating 10 articles to test reliability and discriminant validity. Discriminant validity was examined by testing the hypothesis that the checklists can discriminate quality across studies and discriminate reporting quality from methodological quality.
- Loney criteria for critical appraisal of research articles on prevalence of disease. Used by health professionals to critically appraise research articles that estimate the prevalence or incidence of a disease or health problem. Reference: Loney PL, Chambers LW, Bennett KJ, Roberts JG, Stratford PW. Critical appraisal of the health research literature: prevalence or incidence of a health problem. Chronic Dis Can 1998;19(4):170–176. Validation: Not stated.
- QUADAS (Quality Assessment for Diagnostic Accuracy Studies). Assesses the quality of diagnostic accuracy studies included in systematic reviews. Reference: Whiting P, Rutjes AW, Reitsma JB, et al. The development of QUADAS: a tool for the quality assessment of studies of diagnostic accuracy included in systematic reviews. BMC Med Res Methodol 2003;3:25. Validation: The tool was piloted on a small sample of published studies, focusing on its consistency and reliability, and was also piloted in a number of diagnostic reviews. Regression analyses were used to investigate associations between study characteristics and estimates of diagnostic accuracy in primary studies.
- QUADAS-2. Based on user feedback on the original tool developed in 2003. It is made up of four domains and is applied in four phases. Reference: Whiting P, Rutjes AWS, Westwood ME, et al. QUADAS-2: a revised tool for the quality assessment of diagnostic accuracy studies. Ann Intern Med 2011;155:529–536. Validation: Not stated.
- STROBE Checklist (Strengthening the Reporting of Observational Studies in Epidemiology). A checklist of 22 items relating to the title, abstract, introduction, methods, results and discussion sections of articles. Eighteen items are common to cohort, case–control and cross-sectional studies, and 4 are specific to each of the 3 study designs. Reference: The Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) statement: guidelines for reporting observational studies. Lancet 2007;370:1453–1457. Validation: The generalisability (external validity) of the study results was discussed.
- RoBANS (Risk of Bias Assessment tool for Non-randomized Studies). Contains 6 domains, each scored 'low', 'high' or 'unclear'. It is harmonized with the Cochrane RoB tool and GRADE, and can be incorporated into RevMan and GRADEpro. Validation: States that it is validated.
- Cochrane Risk of Bias tool for non-randomized studies. An extension of the current Cochrane risk of bias tool. Validation: Not stated.
- Mixed Studies Review scoring system. Mixed Studies Reviews are a new form of literature review. This tool assesses qualitative (6 items), quantitative experimental (3 items), quantitative observational (3 items) and mixed methods (3 items) studies. Reference: A scoring system for appraising mixed methods research, and concomitantly appraising qualitative, quantitative and mixed methods primary studies in Mixed Studies Reviews. 2009 Apr;46(4):529–46. Validation: Not validated.
- 14-question clinical guideline checklist. Can be used either as a checklist or to produce a total score. It provides clinicians with a quick way of appraising the quality of a clinical guideline. Validation: Not stated.
- The Appraisal of Guidelines for Research & Evaluation (AGREE II) Instrument. Consists of 23 items. It assesses the quality of guidelines, provides a methodological strategy for developing guidelines, and indicates what information ought to be reported in guidelines and how. Reference: AGREE Collaboration. Development and validation of an international appraisal instrument for assessing the quality of clinical practice guidelines: the AGREE project. Qual Saf Health Care 2003 Feb;12(1):18–23. Validation: Face, construct and criterion validity were measured. Attitudes towards the instrument and user guide were collected by questionnaire. Criterion validity was assessed by calculating Kendall's tau-B rank correlation coefficients between the appraisers' domain scores and the overall assessment scores.
- Critical Appraisal Skills Programme (CASP) checklists. Methodological checklists that provide key criteria relevant to each study type. Validation: Not stated for any of them.
  - Cohort Studies. Reference: CASP, NHS. Critical Appraisal Skills Programme (CASP): appraisal tools. Public Health Resource Unit, NHS 2003.
  - Economic Evaluation Studies. Reference: Drummond MF, Stoddart GL, Torrance GW. Methods for the economic evaluation of health care programmes. Oxford: Oxford University Press, 1987.
  - Diagnostic Test Studies. Reference: Jaeschke R, Guyatt GH, Sackett DL. Users' guides to the medical literature. III. How to use an article about a diagnostic test. JAMA 1994;271(5):389–391.
  - Case Control Studies.
  - Qualitative Research.
  - Systematic Reviews. Reference: Oxman AD, Cook DJ, Guyatt GH. Users' guides to the medical literature. How to use an overview. JAMA 1994;272(17):1367–1371.
- Therapy, Diagnostic, Harm and Prognosis Critical Appraisal Worksheets, plus a systematic review checklist. Methodological checklists that provide key criteria relevant to therapy, diagnostic, harm and prognostic studies and to systematic reviews, respectively. Reference (all five): Straus SE, Richardson WS, Glasziou P, Haynes RB. Evidence based medicine: how to practice and teach it. 4th ed. Edinburgh: Churchill Livingstone, 2010. ISBN 0-702-03127-5; 312 pages. Validation: Not stated.
- Scottish Intercollegiate Guidelines Network (SIGN) checklists. Reference (all four): Scottish Intercollegiate Guidelines Network. ISBN 978 1 905813 81 0. First published December 2011.
  - SIGN Checklist 1: Systematic Reviews and Meta-analyses. Identifies the study, the reviewer, the guideline for which the paper is being considered as evidence, and the key question(s) it is expected to address, and relates to the overall assessment of the paper. Validation: Validity is based on the themes of credibility and accountability, i.e. the link between a set of guidelines and the scientific evidence must be explicit, and scientific and clinical evidence should take precedence over expert judgement (Field 1990). All SIGN guidelines are considered for review three years after publication (SIGN 50 handbook).
  - SIGN Checklist 3: Cohort Studies. Designed to answer questions of the type "What are the effects of this exposure?". It relates to studies that compare a group of people with a particular exposure with another group who either have not had the exposure or have a different level of exposure.
  - SIGN Checklist 4: Case-control Studies. Assesses studies that are generally used to assess the causes of a new problem, but may also be useful for evaluating population-based interventions such as screening.
  - SIGN Checklist 5: Diagnostic Studies. It has 3 sections: it identifies the study, makes a series of statements used to assess the internal validity of the study, and rates the methodological quality of the study based on the responses in the first section.
- McMaster Critical Review Forms.
  - Quantitative Studies. Contains a generic quantitative appraisal tool, accompanied by detailed guidelines for usage. Reference: Law M, Stewart D, Pollock N, et al. Guidelines for Critical Review Form: Quantitative Studies. Hamilton, Ontario: McMaster University Publications, 1999:1–11. Validation: Not stated.
  - Qualitative Studies. Contains a generic qualitative appraisal tool, accompanied by detailed guidelines for usage. Reference: Letts L, Wilkins S, Law M, Stewart D, Bosch J, Westmorland M. Critical Review Form: Qualitative Studies (Version 2.0). Retrieved March 21, 2008. Validation: Not stated.
- Evaluation tool for mixed studies. Allows appraisal of both the qualitative data collection and analysis component and the wider quantitative research design. It is applicable where the aim of the qualitative component is to draw out the informants' understandings and perceptions. Reference: Long AF, Godfrey M, Randall T, Brettle AJ, Grant MJ (2002). Developing Evidence Based Social Care Policy and Practice. Part 3: Feasibility of Undertaking Systematic Reviews in Social Care. Leeds: Nuffield Institute for Health. Validation: Not stated.
- Evaluation Tool for Quantitative Studies. Contains six sub-sections: study evaluative overview; study, setting and sample; ethics; group comparability and outcome measurement; policy and practice implications; and other comments. Reference: … & Godfrey M (2004). An evaluation tool to assess the quality of qualitative research studies. International Journal of Social Research Methodology, Vol. … Validation: Not stated.

Copyright © 2017 AMSTAR. All Rights Reserved.
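The Newcastle-Ottawa Scale, described earlier in this list, allocates a maximum of nine stars across selection, comparability and exposure or outcome. As a minimal sketch, the tally can be expressed in Python. The per-domain caps used here (up to 4 stars for selection, 2 for comparability and 3 for exposure or outcome, giving 9 in total) follow the published NOS, but the class and field names are hypothetical and this is not an official implementation.

```python
from dataclasses import dataclass

# Per-domain star caps (taken from the published NOS: 4 + 2 + 3 = 9 stars).
MAX_STARS = {"selection": 4, "comparability": 2, "exposure_or_outcome": 3}


@dataclass
class NosRating:
    """Hypothetical record of the NOS stars awarded to one study."""

    selection: int
    comparability: int
    exposure_or_outcome: int

    def total(self) -> int:
        """Return the total star count after checking each domain against its cap."""
        awarded = {
            "selection": self.selection,
            "comparability": self.comparability,
            "exposure_or_outcome": self.exposure_or_outcome,
        }
        for domain, stars in awarded.items():
            if not 0 <= stars <= MAX_STARS[domain]:
                raise ValueError(
                    f"{domain}: {stars} stars is outside 0..{MAX_STARS[domain]}"
                )
        return sum(awarded.values())


if __name__ == "__main__":
    study = NosRating(selection=3, comparability=1, exposure_or_outcome=2)
    print(f"{study.total()} of {sum(MAX_STARS.values())} stars")  # 6 of 9 stars
```

The sketch only counts stars; any threshold for calling a study "high quality" is not given in the text above and would have to be agreed by the review team.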

Critical Appraisal

Critical appraisal is the process of carefully and systematically assessing the outcome of scientific research (evidence) to judge its trustworthiness, value and relevance in a particular context. Critical appraisal looks at the way a study is conducted and examines factors such as internal validity, generalizability and relevance. Some initial appraisal questions you could ask are:

1. Is the evidence from a known, reputable source?
2. Has the evidence been evaluated in any way? If so, how and by whom?
3. How up-to-date is the evidence?

Second, you could look at the study itself and ask the following general appraisal questions:

1. How was the outcome measured? Is that a reliable way to measure it?
2. How large was the effect size?
3. What implications does the study have for your practice? Is it relevant?
4. Can the results be applied to your organization?

Questionnaires

If you would like to critically appraise a study, we strongly recommend using the app we have developed for iPhone and Android. You could also consider using appraisal questionnaires (checklists) for specific study designs, but we do not recommend this.

Copyright © 2018 Center for Evidence-Based Management. All Rights Reserved.

Relevance for Public Health

The Critical Appraisal Skills Programme (CASP) tools can be used to teach critical appraisal skills in a wide variety of settings, including public health. For example, the CASP tools can be used to appraise and summarize the evidence on bullying prevention among children and youth to inform local programming. To learn more about using the CASP tools to improve public health practice, see the NCCMT's resources.

Description

The Critical Appraisal Skills Programme (CASP) helped to develop an evidence-based approach in health and social care, working with local, national and international groups. CASP aims to help individuals develop the skills to find and make sense of research evidence, helping them to apply evidence in practice. The CASP tools were developed to teach people how to critically appraise different types of evidence. There are seven checklists, specifically designed to appraise:

- Systematic Reviews
- Randomized Controlled Trials (RCTs)
- Qualitative Research
- Economic Evaluation Studies
- Cohort Studies
- Case Control Studies
- Diagnostic Test Studies

Each of the CASP tools consists of three sections, assessing internal validity, the results and the relevance to practice. The CASP appraisal tools were developed from guides produced by the Evidence Based Medicine Working Group and published in the Journal of the American Medical Association.

Implementing the Method/Tool

Steps for Using Method/Tool

Each Critical Appraisal Skills Programme (CASP) appraisal tool asks three broad questions:

1. Is the study valid?
2. What are the results?
3. Will the results help locally?

Each of the seven appraisal tools includes 10–12 questions. The first two questions are screening questions; if the answer to both is yes, it is worth proceeding with the remaining questions to assess the study. Prompts are given with each question to remind the user why the question is important.
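The screening rule described above (continue only if both screening questions are answered yes) can be sketched in Python. This is a minimal illustration, assuming answers are recorded as "yes", "no" or "can't tell"; that response format is an assumption here, and the `Answer` enum and `worth_continuing` function are hypothetical names, not part of the CASP materials.

```python
from enum import Enum


class Answer(Enum):
    """Assumed response options for a CASP-style checklist question."""

    YES = "yes"
    NO = "no"
    CANT_TELL = "can't tell"


def worth_continuing(screening_answers: list[Answer]) -> bool:
    """Return True only if both screening questions were answered 'yes'.

    Hypothetical helper: the first two questions screen the study, and the
    remaining questions are worth answering only when both answers are yes.
    """
    if len(screening_answers) != 2:
        raise ValueError("expected answers to exactly two screening questions")
    return all(answer is Answer.YES for answer in screening_answers)


if __name__ == "__main__":
    print(worth_continuing([Answer.YES, Answer.YES]))        # True
    print(worth_continuing([Answer.YES, Answer.CANT_TELL]))  # False
```

Treating "can't tell" the same as "no" is a conservative design choice in this sketch, not a rule stated in the CASP description.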

Who is involved

Any individual interested in learning how to critically appraise research evidence could use the CASP tools.

Conditions for Use

Not specified.

Evaluation and Measurement Characteristics

Evaluation: Has been evaluated. The tools were pilot tested in workshops, including feedback and review of materials, using successively broader audiences. Thus the CASP tools are suitable for a wide target audience in service administration and health care delivery.

- Validity: Not applicable
- Reliability: Not applicable
- Methodological Rating: Not applicable

Method/Tool Development

Developer(s): The Critical Appraisal Skills Programme

Method of Development: The CASP checklists were developed using a four-stage process:

1. A multidisciplinary working group and the CASP secretariat drafted written materials.
2. The working group tested the critical appraisal tool and modified it as needed.
3. The tool was piloted with a knowledgeable audience and further modified.
4. Non-expert health professionals used the tool.

The members of the multidisciplinary working groups had backgrounds in public health, epidemiology or evidence-based practice.

Release Date: 2006

Contact Person/Source: Amanda Burls, Critical Appraisal Skills Programme (CASP)
